IBM Capstone Data Engineering Project

This project explored several data engineering technologies, concepts and skills that I acquired while completing the IBM Data Engineering Professional Certificate. You can find all the screenshots and scripts pertaining to this project on GitHub.

Data Platform Architecture and OLTP Database

- Designed and implemented a data platform using MySQL as an OLTP database, and another using MongoDB.

PostgreSQL Data Warehouse

Data Analytics and IBM Cognos Dashboards

- Loaded the data into IBM Cognos Analytics and created dashboards.

ETL & Data Pipeline (Airflow, Python and Bash)

- Automated the process of loading data from MySQL to a PostgreSQL data warehouse.

- Used Airflow to create a pipeline that analyzes web server logs, extracts the required lines and fields, transforms and loads the data.

Big Data Analytics with PySpark

- Used PySpark to analyze search terms from web server data, and loaded a pretrained sales forecasting model to forecast sales for a future year from the given sales data.

Below is a summary of some of the tasks I performed and some of the screenshots I took during the project.

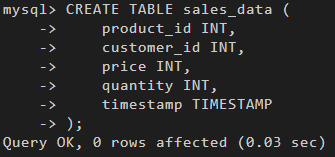

In the first section of the project, I created a MySQL table for sales data and populated it from a sales_data.sql file. I then queried the table, performed operations on it and exported the data.
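As a rough illustration of that create, load, query and export sequence, here is a minimal sketch. It uses Python's built-in sqlite3 module as a stand-in for MySQL so that it runs without a server. The column names and sample rows are assumptions and are not taken from the project's sales_data.sql.

```python
import csv
import io
import sqlite3

# SQLite (in memory) stands in for MySQL here so the sketch runs anywhere.
# Column names are assumptions, not the project's actual schema.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE sales_data (
        product_id  INTEGER,
        customer_id INTEGER,
        price       INTEGER,
        quantity    INTEGER,
        timestamp   TEXT
    )
    """
)

# Made-up rows; in the project the rows came from sales_data.sql.
rows = [
    (6739, 76305, 230, 1, "2020-09-05 16:20:03"),
    (7174, 75234, 197, 1, "2020-09-05 16:20:04"),
    (6739, 61211, 230, 2, "2020-09-05 16:20:05"),
]
conn.executemany("INSERT INTO sales_data VALUES (?, ?, ?, ?, ?)", rows)

# A simple query: how many rows were loaded?
record_count = conn.execute("SELECT COUNT(*) FROM sales_data").fetchone()[0]

# Export the table as CSV text (written to a string here instead of a file).
buffer = io.StringIO()
writer = csv.writer(buffer)
writer.writerow(["product_id", "customer_id", "price", "quantity", "timestamp"])
writer.writerows(conn.execute("SELECT * FROM sales_data ORDER BY timestamp"))
exported_csv = buffer.getvalue()
```

Against a real MySQL server the SQL statements would look much the same, but the connection would go through a MySQL driver and the export would typically be done with a dump or export tool.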

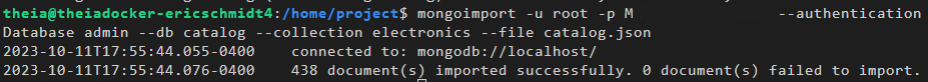

I performed a similar operation with another database in MongoDB. I imported a file into it, performed queries, created an index to improve query performance and exported the database.
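The import and export steps are usually done with MongoDB's command-line tools. The sketch below only builds the argument lists for `mongoimport` and `mongoexport`; it does not run them. The database, collection, field and file names are hypothetical and are not the ones used in the project.

```python
def mongoimport_cmd(db, collection, path):
    """Build a mongoimport command that loads a JSON file into a collection."""
    return ["mongoimport", "--db", db, "--collection", collection, "--file", path]


def mongoexport_cmd(db, collection, fields, out_path):
    """Build a mongoexport command that writes selected fields to a CSV file."""
    return [
        "mongoexport",
        "--db", db,
        "--collection", collection,
        "--type=csv",
        "--fields", ",".join(fields),
        "--out", out_path,
    ]


# Hypothetical names, chosen only for illustration.
import_cmd = mongoimport_cmd("catalog", "electronics", "catalog.json")
export_cmd = mongoexport_cmd(
    "catalog", "electronics", ["_id", "type", "model"], "electronics.csv"
)

print(" ".join(import_cmd))
print(" ".join(export_cmd))
```

On a machine with the MongoDB tools installed and a server running, each list could be passed to `subprocess.run(cmd, check=True)`. The index itself would be created from the MongoDB shell, for example with `createIndex` on the field that the queries filter by.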

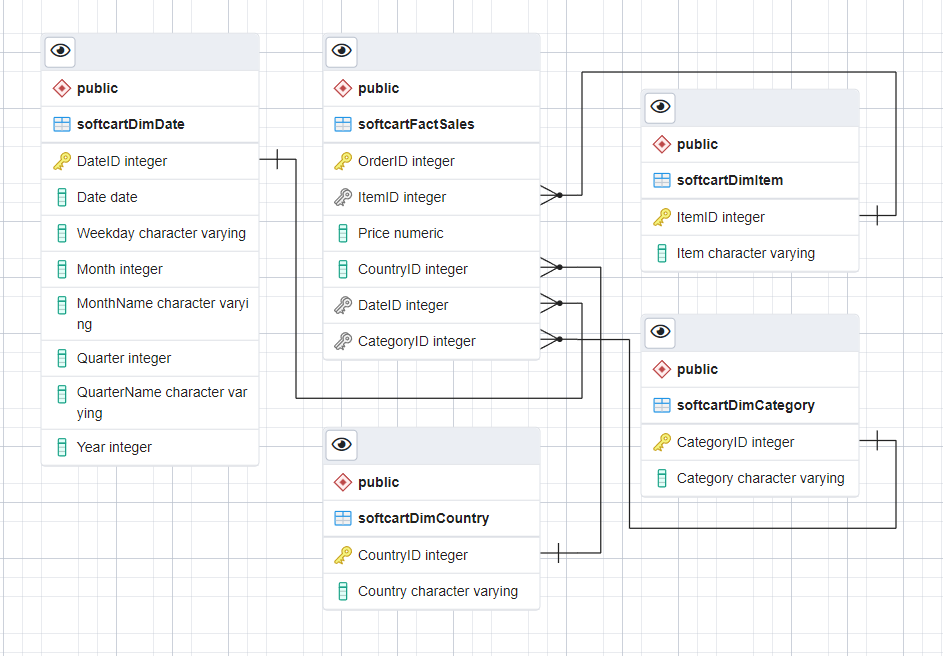

I also designed and created a star schema in PostgreSQL for a database intended to hold e-commerce data. I then ran several queries against it, from simple SELECT statements to GROUPING SETS, CUBE and ROLLUP aggregations, and created a materialized view.
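To make the ROLLUP idea concrete: it returns the regular grouped totals plus a subtotal for each prefix of the grouping columns and a grand total. The pure-Python sketch below emulates what a query such as `GROUP BY ROLLUP (country, category)` would return. The rows and column names are made up for illustration and are not the project's data.

```python
from collections import defaultdict

# Made-up fact rows: (country, category, amount). Not project data.
sales = [
    ("Canada", "Books", 100),
    ("Canada", "Toys", 50),
    ("India", "Books", 70),
]


def rollup(rows, n_keys):
    """Emulate GROUP BY ROLLUP over the first n_keys columns.

    Returns {key_tuple: total}. Rolled-up columns are None, mirroring
    the NULLs that PostgreSQL emits for subtotal rows.
    """
    totals = defaultdict(int)
    for row in rows:
        keys, amount = row[:n_keys], row[n_keys]
        # One grouping per prefix: (a, b), then (a, None), then (None, None).
        for depth in range(n_keys, -1, -1):
            grouped = keys[:depth] + (None,) * (n_keys - depth)
            totals[grouped] += amount
    return dict(totals)


result = rollup(sales, 2)
for key, total in sorted(result.items(), key=lambda item: str(item[0])):
    print(key, total)
```

CUBE goes one step further and also produces the groupings that ROLLUP skips, such as totals per category across all countries.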

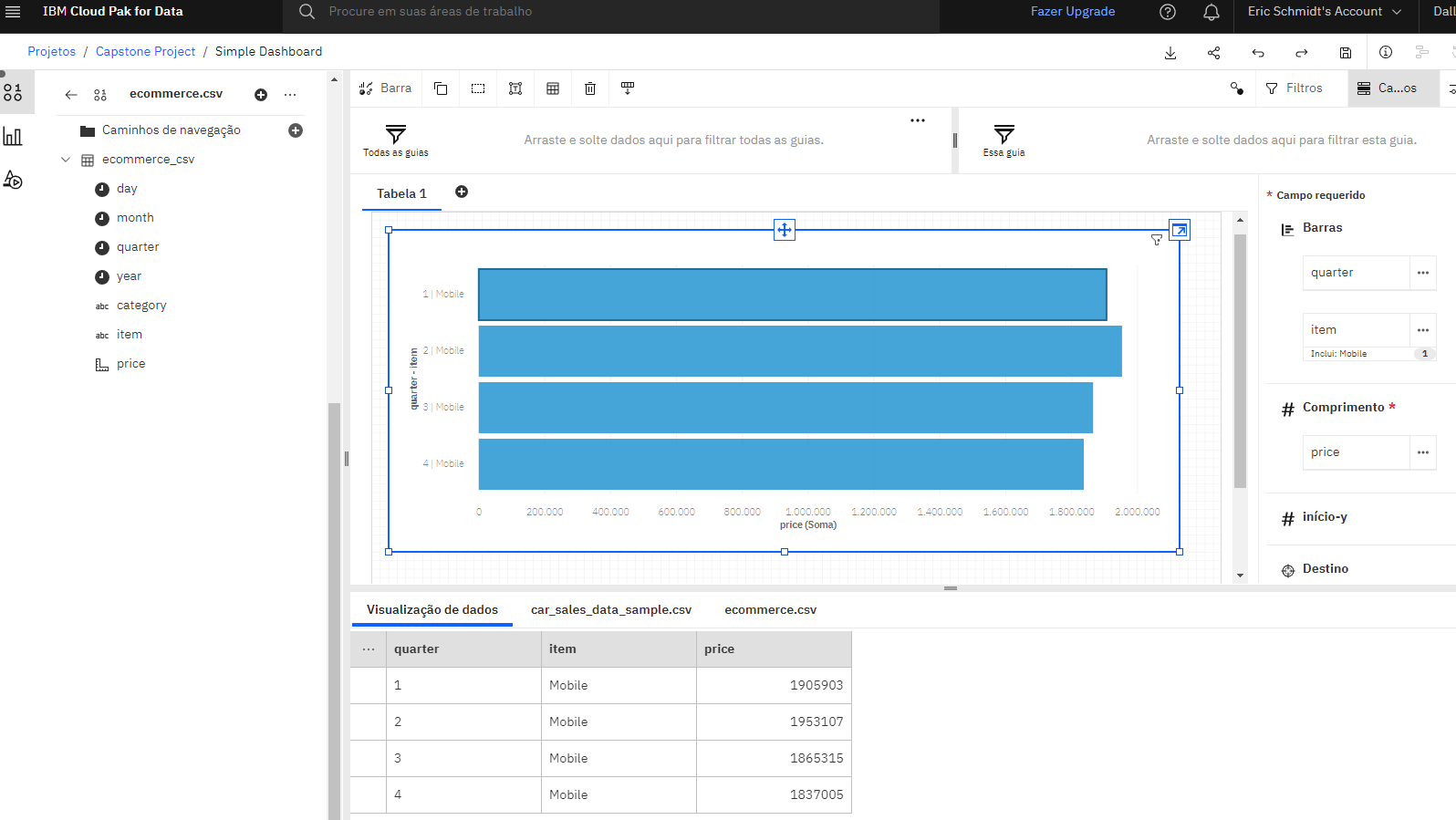

I imported a dataset into IBM Cognos Analytics and built dashboard visualizations: a bar chart of mobile phone sales in each quarter, a line chart of sales for each month of 2022, and a pie chart of sales for three product categories.

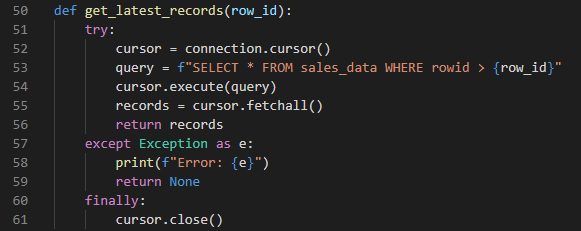

I automated the process of retrieving the latest records from a MySQL table and inserting them into a PostgreSQL data warehouse. Below are the Python functions that fetch the records, insert them and the output I got after executing the script.
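Separately from the project's own script, the incremental-load idea can be sketched as follows: find the highest row ID already in the warehouse, fetch only the newer rows from the source, then insert them. Two in-memory SQLite databases stand in for MySQL and PostgreSQL so the example runs without servers. The table layout, the `row_id` column and the function names are assumptions.

```python
import sqlite3

# In-memory SQLite databases stand in for the MySQL source and the
# PostgreSQL warehouse. The schema and the row_id watermark are assumptions.
source = sqlite3.connect(":memory:")
warehouse = sqlite3.connect(":memory:")
ddl = (
    "CREATE TABLE sales_data ("
    "row_id INTEGER PRIMARY KEY, product_id INTEGER, price INTEGER)"
)
source.execute(ddl)
warehouse.execute(ddl)

# Made-up rows: the source holds four records, the warehouse only the first two.
source.executemany(
    "INSERT INTO sales_data VALUES (?, ?, ?)",
    [(1, 6739, 230), (2, 7174, 197), (3, 7931, 411), (4, 6739, 230)],
)
warehouse.executemany(
    "INSERT INTO sales_data VALUES (?, ?, ?)",
    [(1, 6739, 230), (2, 7174, 197)],
)


def get_last_row_id(wh):
    """Return the highest row_id already in the warehouse (0 if empty)."""
    query = "SELECT COALESCE(MAX(row_id), 0) FROM sales_data"
    return wh.execute(query).fetchone()[0]


def get_latest_records(src, last_row_id):
    """Fetch source rows that are newer than the warehouse watermark."""
    query = (
        "SELECT row_id, product_id, price FROM sales_data "
        "WHERE row_id > ? ORDER BY row_id"
    )
    return src.execute(query, (last_row_id,)).fetchall()


def insert_records(wh, records):
    """Append the new rows to the warehouse table."""
    wh.executemany("INSERT INTO sales_data VALUES (?, ?, ?)", records)
    wh.commit()


last_row_id = get_last_row_id(warehouse)
new_records = get_latest_records(source, last_row_id)
insert_records(warehouse, new_records)
print(f"Loaded {len(new_records)} new rows after row_id {last_row_id}")
```

Using the maximum row ID as a watermark keeps each run cheap, because only rows the warehouse has not yet seen are transferred.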

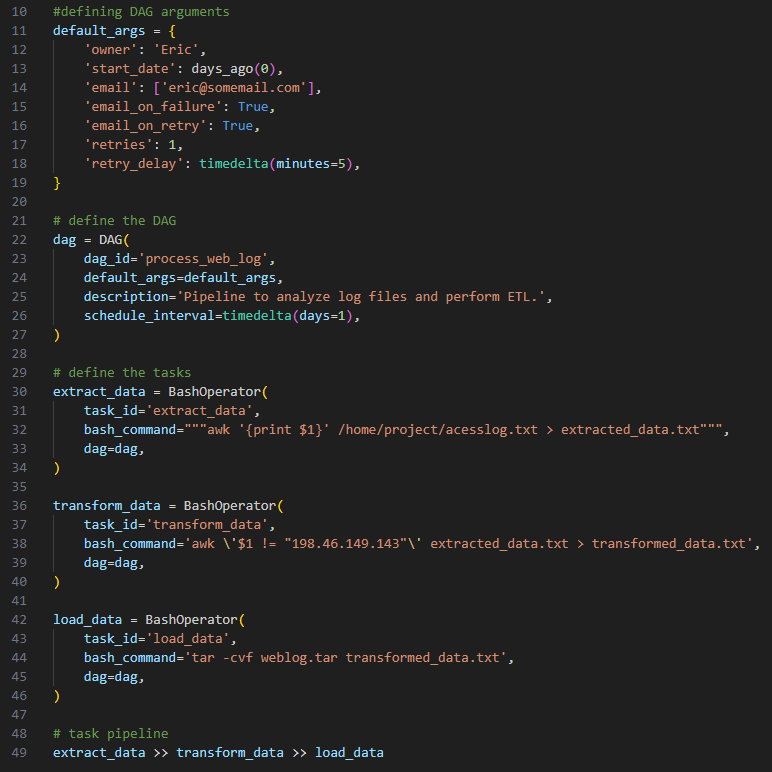

I used Airflow to create a data pipeline that extracts specific IP addresses from an access log file and loads them into a destination file.
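The extract, transform and load steps of such a pipeline can be sketched as plain Python functions. This is not the project's DAG: the log lines, the excluded IP address and the output file name are all made up, and the filtering rule (dropping one address) is an assumption about what "specific IP addresses" means.

```python
import os
import tempfile


def extract(log_lines):
    """Take the first whitespace-separated field (the client IP) of each line."""
    return [line.split()[0] for line in log_lines if line.strip()]


def transform(ip_addresses, excluded_ip):
    """Drop every occurrence of one IP address."""
    return [ip for ip in ip_addresses if ip != excluded_ip]


def load(ip_addresses, destination_path):
    """Write one IP address per line to the destination file."""
    with open(destination_path, "w", encoding="utf-8") as handle:
        handle.write("\n".join(ip_addresses) + "\n")


# Made-up log lines in common log format; 203.0.113.7 is a hypothetical
# address to exclude.
sample_log = [
    '198.51.100.4 - - [01/Jan/2022:10:00:00 +0000] "GET / HTTP/1.1" 200 512',
    '203.0.113.7 - - [01/Jan/2022:10:00:01 +0000] "GET /cart HTTP/1.1" 200 128',
    '192.0.2.15 - - [01/Jan/2022:10:00:02 +0000] "GET /search HTTP/1.1" 200 256',
]

destination = os.path.join(tempfile.mkdtemp(), "transformed_data.txt")
load(transform(extract(sample_log), "203.0.113.7"), destination)

with open(destination, encoding="utf-8") as handle:
    loaded = handle.read().splitlines()
print(loaded)
```

In Airflow, each of these functions could become its own task, for example through a `PythonOperator`, with the tasks chained in extract, transform, load order.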

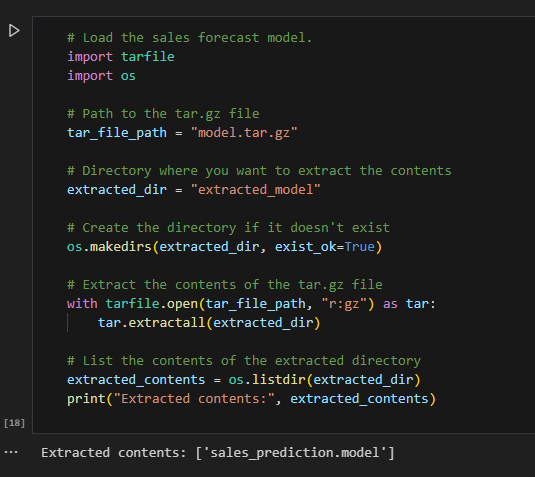

I used PySpark to load a sales prediction model, apply it to a sales data set, and predict the sales for the year 2023.

Brandon Lee Tran

IBM Data Engineering Capstone Project

In this IBM-sponsored project, I assumed the role of a Junior Data Engineer who has recently joined a fictional online e-commerce company named SoftCart. Presented with real-world use cases, I was required to apply a number of industry-standard data engineering solutions.

- Demonstrate proficiency in skills required for an entry-level data engineering role

- Design and implement various concepts and components in the data engineering lifecycle such as data repositories

- Showcase working knowledge with relational databases, NoSQL data stores, big data engines, data warehouses, and data pipelines

- Apply skills in Linux shell scripting, SQL, and Python programming languages to Data Engineering problems

Project Outline

- SoftCart’s online presence is primarily through its website, which customers access using a variety of devices like laptops, mobiles and tablets.

- All the catalog data of the products is stored in the MongoDB NoSQL server.

- All the transactional data like inventory and sales are stored in the MySQL database server.

- SoftCart’s webserver is driven entirely by these two databases.

- Data is periodically extracted from these two databases and put into the staging data warehouse running on PostgreSQL.

- The production data warehouse runs on a cloud instance of IBM DB2.

- BI teams connect to IBM DB2 to create operational dashboards. IBM Cognos Analytics is used to create dashboards.

- SoftCart uses a Hadoop cluster as its big data platform, where all the data is collected for analytics purposes.

- Spark is used to analyse the data on the Hadoop cluster.

- ETL pipelines running on Apache Airflow move data between the OLTP database, the NoSQL database and the data warehouse.

Tools/Software

- OLTP Database – MySQL

- NoSQL Database – MongoDB

- Production Data Warehouse – DB2 on Cloud

- Staging Data Warehouse – PostgreSQL

- Big Data Platform – Hadoop

- Big Data Analytics Platform – Spark

- Business Intelligence Dashboard – IBM Cognos Analytics

- Data Pipelines – Apache Airflow


Data Engineering Capstone Project

This course is part of IBM Data Engineering Professional Certificate


Instructors: Rav Ahuja +1 more


Recommended experience

Advanced level

Complete all prior courses in the IBM Data Engineering Professional Certificate.

What you'll learn

Demonstrate proficiency in skills required for an entry-level data engineering role.

Design and implement various concepts and components in the data engineering lifecycle such as data repositories.

Showcase working knowledge with relational databases, NoSQL data stores, big data engines, data warehouses, and data pipelines.

Apply skills in Linux shell scripting, SQL, and Python programming languages to Data Engineering problems.

Skills you'll gain

- Data Management

- Data Visualization Software

- Data Visualization


There are 7 modules in this course

Showcase your skills in this Data Engineering project! In this course you will apply a variety of data engineering skills and techniques you have learned as part of the previous courses in the IBM Data Engineering Professional Certificate.

You will demonstrate your knowledge of Data Engineering by assuming the role of a Junior Data Engineer who has recently joined an organization and be presented with a real-world use case that requires architecting and implementing a data analytics platform. In this Capstone project you will complete numerous hands-on labs. You will create and query data repositories using relational and NoSQL databases such as MySQL and MongoDB. You’ll also design and populate a data warehouse using PostgreSQL and IBM Db2 and write queries to perform Cube and Rollup operations. You will generate reports from the data in the data warehouse and build a dashboard using Cognos Analytics. You will also show your proficiency in Extract, Transform, and Load (ETL) processes by creating data pipelines for moving data from different repositories. You will perform big data analytics using Apache Spark to make predictions with the help of a machine learning model. This course is the final course in the IBM Data Engineering Professional Certificate. It is recommended that you complete all the previous courses in this Professional Certificate before starting this course.

Data Platform Architecture and OLTP Database

In this module, you will design a data platform that uses MySQL as an OLTP database. You will be using MySQL to store the OLTP data.

What's included

2 videos 2 quizzes 1 app item 2 plugins

2 videos • Total 5 minutes

- Introduction to Capstone Project • 4 minutes • Preview module

- Assignment Overview • 1 minute

2 quizzes • Total 22 minutes

- Checklist: OLTP Database • 10 minutes

- Graded Quiz: OLTP Database • 12 minutes

1 app item • Total 30 minutes

- Hands-on Lab: OLTP Database • 30 minutes

2 plugins • Total 15 minutes

- Data Platform Architecture • 10 minutes

- OLTP Database Requirements and Design • 5 minutes

Querying Data in NoSQL Databases

In this module, you will design a data platform that uses MongoDB as a NoSQL database. You will use MongoDB to store the e-commerce catalog data.

1 video 2 quizzes 1 app item

1 video • Total 1 minute

- Assignment Overview: Querying Data in NoSQL Databases • 1 minute • Preview module

2 quizzes • Total 25 minutes

- Checklist: Querying Data in NoSQL Databases • 10 minutes

- Graded Quiz: Querying Data in NoSQL Databases • 15 minutes

- Hands-on Lab: Querying Data in NoSQL Databases • 30 minutes

Build a Data Warehouse

In this module you will design and implement a data warehouse and you will then generate reports from the data in the data warehouse.

2 videos 1 reading 3 quizzes 3 app items 1 plugin

2 videos • Total 4 minutes

- Assignment Overview: Data Warehouse Design & Setup • 2 minutes • Preview module

- Assignment Overview: Data Warehouse Reporting • 1 minute

1 reading • Total 1 minute

- Optional Lab Information • 1 minute

3 quizzes • Total 45 minutes

- Checklist: Data Warehousing • 14 minutes

- Checklist: Data Warehouse Reporting • 16 minutes

- Graded Quiz: Data Warehouse & Reporting • 15 minutes

3 app items • Total 180 minutes

- Hands-on Lab: Data Warehousing • 60 minutes

- Hands-on Lab: Data Warehouse Reporting using PostgreSQL • 60 minutes

- (Optional) Obtain IBM Cloud Feature Code and Activate Trial Account • 60 minutes

1 plugin • Total 30 minutes

- (Optional) Hands-on Lab: Data Warehouse Reporting using DB2 • 30 minutes

Data Analytics

In this module, you will assume the role of a data engineer at an e-commerce company. Your company has finished setting up a data warehouse. Now you are assigned the responsibility to design a reporting dashboard that reflects the key metrics of the business.

1 video 2 quizzes 1 plugin

- Assignment Overview • 1 minute • Preview module

2 quizzes • Total 27 minutes

- Checklist: Dashboard Creation • 12 minutes

- Graded Quiz: Dashboard Creation • 15 minutes

- Hands-On Lab: Dashboard Creation • 30 minutes

ETL & Data Pipelines

In this module, you will use the given python script to perform various ETL operations that move data from RDBMS to NoSQL, NoSQL to RDBMS, and from RDBMS, NoSQL to the data warehouse. You will write a pipeline that analyzes the web server log file, extracts the required lines and fields, transforms and loads data.

2 videos 3 quizzes 2 app items

- Assignment Overview: ETL • 2 minutes • Preview module

- Assignment Overview: Data Pipelines using Apache Airflow • 1 minute

3 quizzes • Total 39 minutes

- Checklist: ETL • 6 minutes

- Checklist: Data Pipelines using Apache Airflow • 18 minutes

- Graded Quiz: ETL & Data Pipelines using Apache Airflow • 15 minutes

2 app items • Total 90 minutes

- Hands-on Lab: ETL • 60 minutes

- Hands-on Lab: Data Pipelines using Apache Airflow • 30 minutes

Big Data Analytics with Spark

In this module, you will use the data from a webserver to analyse search terms. You will then load a pretrained sales forecasting model and predict the sales forecast for a future year.

1 video 2 quizzes 2 app items

- Assignment Overview: Big Data Analytics with Spark • 0 minutes • Preview module

2 quizzes • Total 29 minutes

- Checklist: Big Data Analytics with Spark • 14 minutes

- Graded Quiz: Big Data Analytics with Spark • 15 minutes

2 app items • Total 60 minutes

- Practice Hands On Lab: Saving and loading a SparkML model • 30 minutes

- Hands-on Lab: SparkML Ops • 30 minutes

Final Submission and Peer Review

In this final module you will complete your submission of screenshots from the hands-on labs for your peers to review. Once you have completed your submission you will then review the submission of one of your peers and grade their submission.

2 readings 1 peer review

2 readings • Total 3 minutes

- Congrats & Next Steps • 2 minutes

- Thanks from the Course Team • 1 minute

1 peer review • Total 120 minutes

- Submit your Work and Review your Peers • 120 minutes




