Hypothesis in Machine Learning

The concept of a hypothesis is fundamental in Machine Learning and data science endeavors. In the realm of machine learning, a hypothesis serves as an initial assumption made by data scientists and ML professionals when attempting to address a problem. Machine learning involves conducting experiments based on past experiences, and these hypotheses are crucial in formulating potential solutions.

It’s important to note that in machine learning discussions, the terms “hypothesis” and “model” are sometimes used interchangeably. However, a hypothesis represents an assumption, while a model is a mathematical representation employed to test that hypothesis. This section on “Hypothesis in Machine Learning” explores key aspects related to hypotheses in machine learning and their significance.

A hypothesis in machine learning is the model’s assumption about the relationship between the input features and the output. It is a representation of the mapping function that the algorithm is trying to learn from the training set. The learning process adjusts the weights that parameterize the hypothesis so as to minimize the discrepancy between the predicted and actual outputs. A cost function measures how well the hypothesis fits, and the objective is to find the parameters that give the best predictive performance on new, unseen data.
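As a rough illustration of this idea, the sketch below fits a linear hypothesis by gradient descent on a mean-squared-error cost. The data, the learning rate, the step count, and the function names (`hypothesis`, `cost`, `fit`) are assumptions made for this example and are not taken from any particular library.

```python
# Minimal sketch: fit a linear hypothesis h(x) = w * x + b by gradient descent.
# The data, learning rate, and step count are illustrative assumptions.

def hypothesis(x, w, b):
    """Predict an output for input x under the current parameters."""
    return w * x + b

def cost(xs, ys, w, b):
    """Mean squared error between predicted and actual outputs."""
    return sum((hypothesis(x, w, b) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def fit(xs, ys, lr=0.05, steps=2000):
    """Repeatedly adjust w and b in the direction that lowers the cost."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        errors = [hypothesis(x, w, b) - y for x, y in zip(xs, ys)]
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
w, b = fit(xs, ys)
print(round(w, 3), round(b, 3), cost(xs, ys, w, b))
```

Each pair (w, b) is one hypothesis. Training moves through the set of all such pairs toward the one with the lowest cost on the training set.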

What is Hypothesis Testing?

Researchers must consider the possibility that their findings could have happened accidentally before interpreting them. The systematic process of determining whether the findings of a study validate a specific theory that pertains to a population is known as hypothesis testing.

Hypothesis testing uses sample data to assess a hypothesis about a population. A hypothesis test evaluates how unusual the observed result is: whether it is plausibly due to chance variation or too extreme to be attributed to chance.
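To make "how unusual is the result" concrete, here is a small sketch of an exact two-sided binomial test for a coin. The scenario (100 flips, a fair-coin null hypothesis) and the helper name `binomial_two_sided_p` are assumptions chosen for illustration.

```python
from math import comb

def binomial_two_sided_p(heads, flips, p_null=0.5):
    """Exact two-sided p-value: total probability, under the null,
    of every outcome that is no more likely than the observed one."""
    def pmf(k):
        return comb(flips, k) * p_null**k * (1 - p_null) ** (flips - k)

    observed = pmf(heads)
    # The small tolerance guards against floating-point ties.
    return sum(pmf(k) for k in range(flips + 1) if pmf(k) <= observed * (1 + 1e-9))

p_mild = binomial_two_sided_p(55, 100)     # 55 heads out of 100 flips
p_extreme = binomial_two_sided_p(70, 100)  # 70 heads out of 100 flips
print(f"55 heads: p = {p_mild:.3f}")
print(f"70 heads: p = {p_extreme:.2e}")
```

In this sketch, 55 heads is a reasonable chance variation for a fair coin, while 70 heads is too extreme to attribute to chance at the usual 0.05 level.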

How does a Hypothesis work?

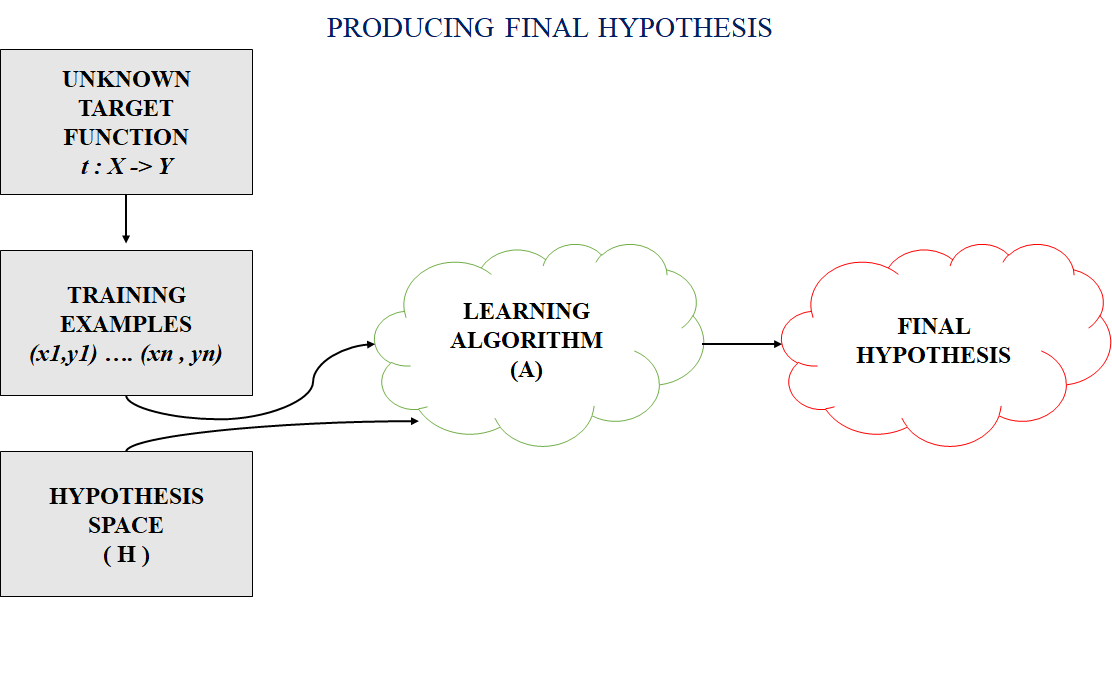

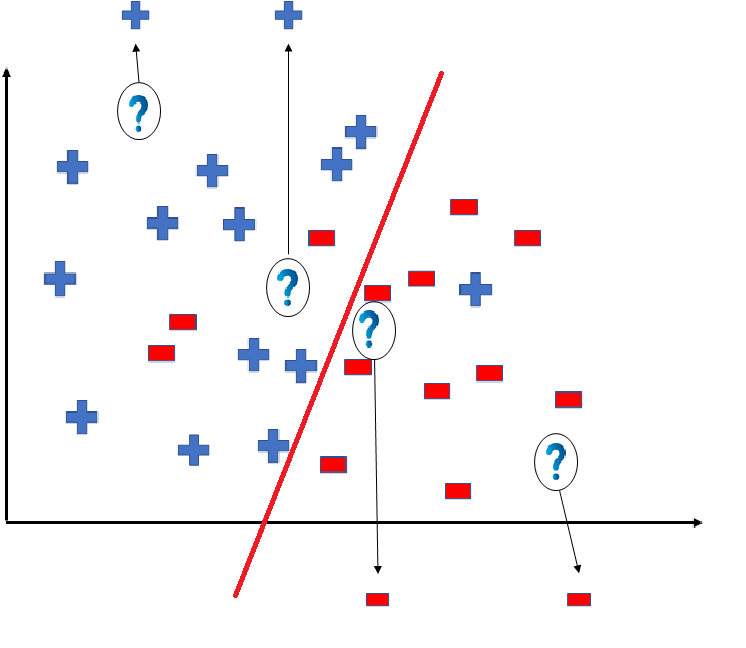

In most supervised machine learning algorithms, our main goal is to find a possible hypothesis from the hypothesis space that could map out the inputs to the proper outputs. The following figure shows the common method to find out the possible hypothesis from the Hypothesis space:

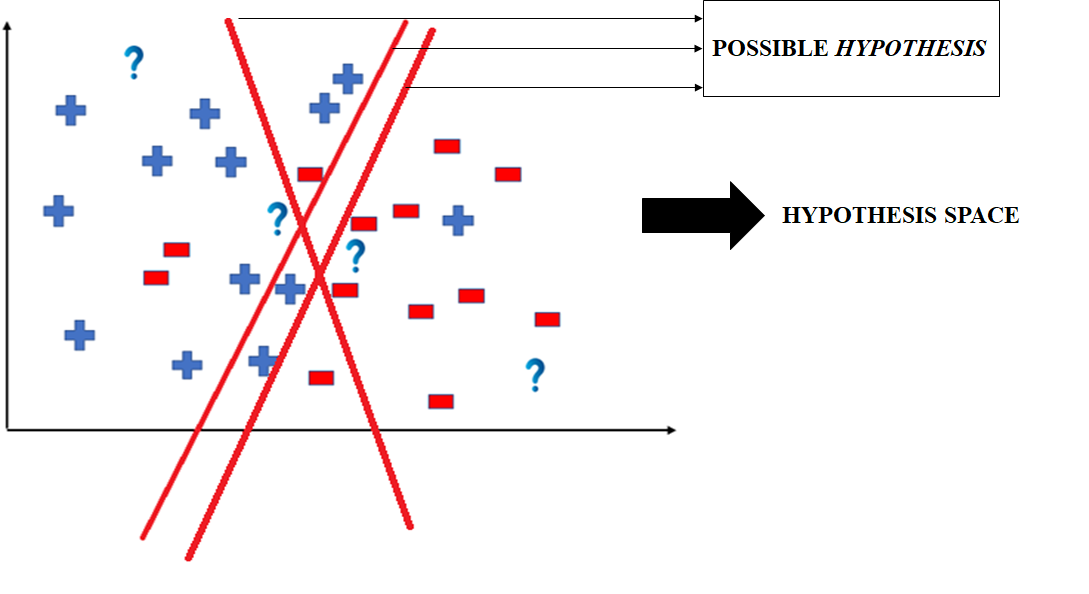

Hypothesis Space (H)

Hypothesis space is the set of all possible legal hypotheses. It is the set from which the machine learning algorithm selects the single hypothesis that best describes the target function or the outputs.

Hypothesis (h)

A hypothesis is a function that best describes the target in supervised machine learning. The hypothesis that an algorithm comes up with depends on the data and on the restrictions and bias that we have imposed on the data.

For a simple linear model, the hypothesis can be written as y = mx + b, where:

- m = slope of the line

- b = intercept

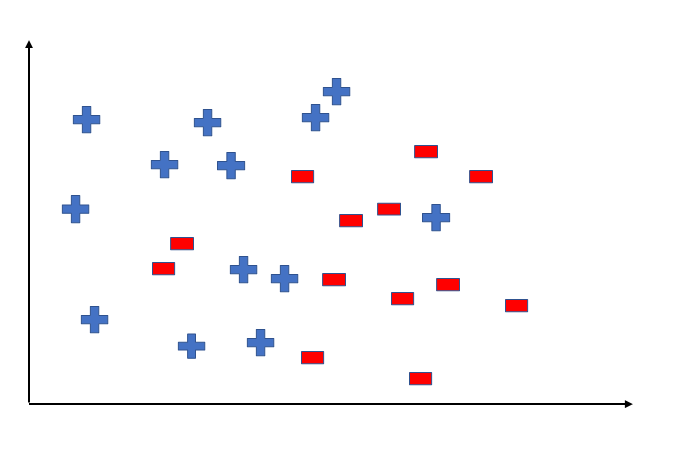

To better understand the Hypothesis Space and Hypothesis consider the following coordinate that shows the distribution of some data:

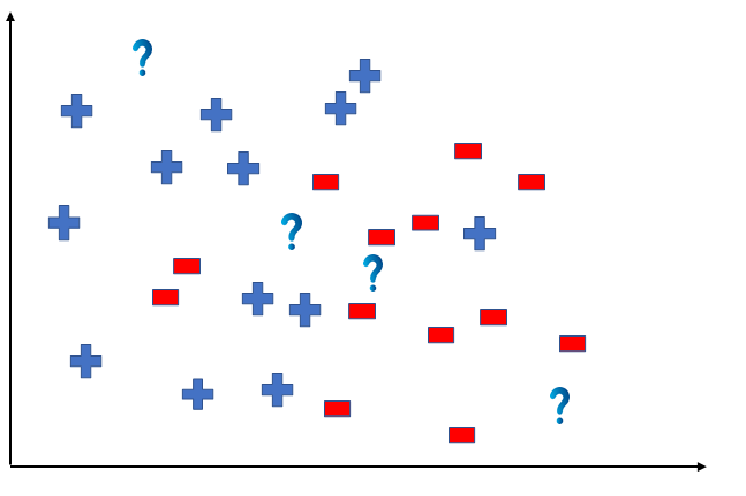

Say suppose we have test data for which we have to determine the outputs or results. The test data is as shown below:

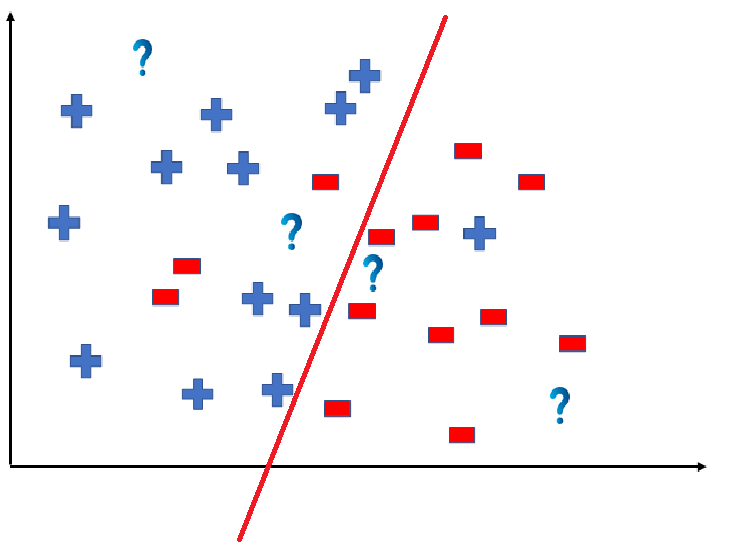

We can predict the outcomes by dividing the coordinate as shown below:

So the test data would yield the following result:

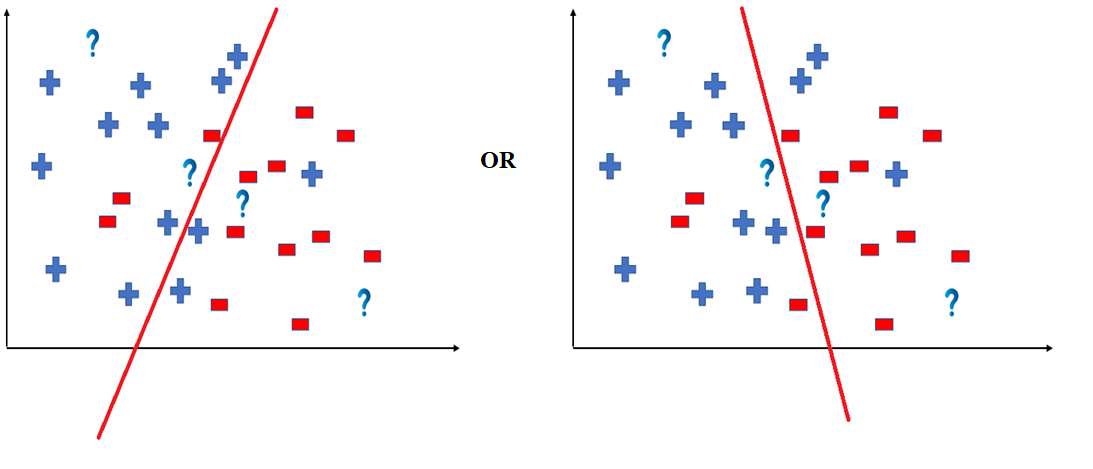

But note here that we could have divided the coordinate plane as:

The way in which the coordinate plane is divided depends on the data, the algorithm, and the constraints.

- All the legal possible ways in which we can divide the coordinate plane to predict the outcome of the test data together make up the Hypothesis Space.

- Each individual possible way is known as the hypothesis.
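The idea can be sketched in code by treating every axis-aligned split of the plane as one hypothesis and collecting the splits into a hypothesis space. The points, the thresholds, and the names (`make_hypothesis`, `H`, `accuracy`) are hypothetical and are not the data shown in the figures.

```python
# Hypothetical 2-D training points: (x, y, label).
train = [(1, 1, 0), (2, 1, 0), (1, 3, 0), (4, 4, 1), (5, 3, 1), (4, 1, 1)]

def make_hypothesis(axis, threshold):
    """One hypothesis: predict 1 if the chosen coordinate exceeds the threshold."""
    def h(point):
        return 1 if point[axis] > threshold else 0
    return h

# Hypothesis space H: every vertical or horizontal split at a half-integer threshold.
H = {
    (axis, t + 0.5): make_hypothesis(axis, t + 0.5)
    for axis in (0, 1)
    for t in range(0, 6)
}

def accuracy(h, data):
    """Fraction of labeled points that hypothesis h classifies correctly."""
    return sum(h((x, y)) == label for x, y, label in data) / len(data)

# Pick the hypothesis from H that best fits the training data.
best_key = max(H, key=lambda k: accuracy(H[k], train))
best_h = H[best_key]
print(best_key, accuracy(best_h, train), best_h((3, 2)))
```

More than one split can separate these points perfectly, which mirrors the observation that the plane could have been divided in a different way. Which one is returned depends on the algorithm and its constraints.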

Hence, in this example the hypothesis space would be like:

Hypothesis in Statistics

In statistics, a hypothesis refers to a statement or assumption about a population parameter. It is a proposition or educated guess that helps guide statistical analyses. There are two types of hypotheses: the null hypothesis (H0) and the alternative hypothesis (H1 or Ha).

- Null Hypothesis (H0): This hypothesis suggests that there is no significant difference or effect, and any observed results are due to chance. It often represents the status quo or a baseline assumption.

- Alternative Hypothesis (H1 or Ha): This hypothesis contradicts the null hypothesis, proposing that there is a significant difference or effect in the population. It is what researchers aim to support with evidence.

Frequently Asked Questions (FAQs)

1. How does the training process use the hypothesis?

The learning algorithm uses the hypothesis as a guide to minimise the discrepancy between expected and actual outputs by adjusting its parameters during training.

2. How is the hypothesis’s accuracy assessed?

Usually, a cost function that calculates the difference between expected and actual values is used to assess accuracy. The aim is to optimise the model so that this cost is as low as possible.

3. What is Hypothesis testing?

Hypothesis testing is a statistical method for deciding whether sample data provide enough evidence to reject a hypothesis. The hypothesis can be about two variables in a dataset, about an association between two groups, or about a situation.

4. What distinguishes the null hypothesis from the alternative hypothesis in machine learning experiments?

The null hypothesis (H0) assumes no significant effect, while the alternative hypothesis (H1 or Ha) contradicts H0, suggesting a meaningful impact. Statistical testing is employed to decide between these hypotheses.

Best Guesses: Understanding The Hypothesis in Machine Learning

- February 22, 2024

- General , Supervised Learning , Unsupervised Learning

Machine learning is a vast and complex field that has inherited many terms from other places all over the mathematical domain.

It can sometimes be challenging to get your head around all the different terminologies, never mind trying to understand how everything comes together.

In this blog post, we will focus on one particular concept: the hypothesis.

While you may think this is simple, there is a little caveat in machine learning: the term has two sides, the statistics side and the learning side.

Don’t worry; we’ll do a full breakdown below.

You’ll learn the following:

- What is a hypothesis in machine learning?

- Is this any different than the hypothesis in statistics?

- What is the difference between the alternative hypothesis and the null?

- Why do we restrict hypothesis space in artificial intelligence?

- Example code performing hypothesis testing in machine learning

What Is a Hypothesis in Machine Learning?

In machine learning, the term ‘hypothesis’ can refer to two things.

First, it can refer to the hypothesis space, the set of all candidate hypotheses (functions mapping inputs to outputs) that an algorithm can choose from to predict or answer a new instance.

Second, it can refer to the traditional null and alternative hypotheses from statistics.

Since machine learning works so closely with statistics, 90% of the time, when someone is referencing the hypothesis, they’re referencing hypothesis tests from statistics.

Is This Any Different Than The Hypothesis In Statistics?

In statistics, the hypothesis is an assumption made about a population parameter.

The statistician’s goal is to decide whether the sample data provide enough evidence to reject it.

This will take the form of two different hypotheses, one called the null, and one called the alternative.

Usually, you’ll establish your null hypothesis as an assumption that it equals some value.

For example, in Welch’s T-Test Of Unequal Variance, our null hypothesis is that the two means we are testing (population parameter) are equal.

This means our null hypothesis is that the two population means are the same.

We run our statistical tests, and if our p-value is significant (very low), we reject the null hypothesis.

This would suggest that the population means behind the two samples you are testing are unequal.

Usually, statisticians will use a significance level of .05 (a 5% risk of rejecting a null hypothesis that is actually true) when deciding what to use as the p-value cut-off.

What Is The Difference Between The Alternative Hypothesis And The Null?

The null hypothesis is our default assumption, which we keep unless the data give us strong evidence against it.

The alternative hypothesis is usually the opposite of our null and is much broader in scope.

For most statistical tests, the null and alternative hypotheses are already defined.

You are then just trying to find “significant” evidence you can use to reject the null hypothesis.

These two hypotheses are easy to spot by their specific notation. The null hypothesis is usually denoted by H₀, while H₁ denotes the alternative hypothesis.

Example Code Performing Hypothesis Testing In Machine Learning

Since there are many different hypothesis tests in machine learning and data science, we will focus on one of my favorites.

This test is Welch’s T-Test Of Unequal Variance, where we are trying to determine if the population means of these two samples are different.

There are a couple of assumptions for this test, but we will ignore those for now and show the code.

You can read more about this in our other post, Welch’s T-Test of Unequal Variance.
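A minimal sketch of such a test, assuming NumPy and SciPy are installed. The two samples are synthetic and invented for illustration (they are not the data from the original post), and they deliberately have different variances and sizes.

```python
import numpy as np
from scipy import stats

# Synthetic samples (an assumption for this sketch): different means,
# different spreads, and different sizes.
rng = np.random.default_rng(seed=0)
sample_a = rng.normal(loc=50.0, scale=5.0, size=100)
sample_b = rng.normal(loc=65.0, scale=15.0, size=80)

# equal_var=False selects Welch's t-test instead of Student's t-test.
t_stat, p_value = stats.ttest_ind(sample_a, sample_b, equal_var=False)

print(f"t = {t_stat:.3f}, p = {p_value:.3g}")
if p_value < 0.05:
    print("Reject the null hypothesis: the population means appear to differ.")
else:
    print("Fail to reject the null hypothesis.")
```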

We see that our p-value is very low, and we reject the null hypothesis.

What Is The Difference Between The Biased And Unbiased Hypothesis Spaces?

The difference between the biased and unbiased hypothesis space is how many possible combinations of examples your algorithm is able to represent.

The unbiased space can represent all of them, and the biased space can only represent rules that match the training examples you’ve supplied.

Since neither of these is optimal (one is too small, one is much too big), your algorithm creates generalized rules (inductive learning) to be able to handle examples it hasn’t seen before.

Here’s an example of each:

Example of The Biased Hypothesis Space In Machine Learning

The biased hypothesis space in machine learning is a restricted subspace in which your algorithm does not consider every possible combination of examples when making predictions.

This is easiest to see with an example.

Let’s say you have the following data:

Happy and Sunny and Stomach Full = True

Whenever your algorithm sees those three together in the biased hypothesis space, it’ll automatically default to true.

This means when your algorithm sees:

Sad and Sunny And Stomach Full = False

It’ll automatically default to False since it didn’t appear in our subspace.

This is a greedy approach, but it has some practical applications.

Example of the Unbiased Hypothesis Space In Machine Learning

The unbiased hypothesis space is a space where all combinations are stored.

We can reuse our example above:

This would start to break down as:

Happy = True

Happy and Sunny = True

Happy and Stomach Full = True

Let’s say you have four options for each of the three choices.

This would mean our subspace would need 2^12 instances (4096) just for our little three-word problem.

This is practically impossible; the space would become huge.

So while it would be highly accurate, this has no scalability.

More reading on this idea can be found in our post, Inductive Bias In Machine Learning .

Why Do We Restrict Hypothesis Space In Artificial Intelligence?

We have to restrict the hypothesis space in machine learning. Without any restrictions, our domain becomes much too large, and we lose any form of scalability.

This is why our algorithm creates rules to handle examples that are seen in production.

This gives our algorithms a generalized approach that will be able to handle all new examples that are in the same format.

Other Quick Machine Learning Tutorials

At EML, we have a ton of cool data science tutorials that break things down so anyone can understand them.

Below we’ve listed a few that are similar to this guide:

- Instance-Based Learning in Machine Learning

- Types of Data For Machine Learning

- Verbose in Machine Learning

- Generalization In Machine Learning

- Epoch In Machine Learning

- Inductive Bias in Machine Learning

- Understanding The Hypothesis In Machine Learning

- Zip Codes In Machine Learning

- get_dummies() in Machine Learning

- Bootstrapping In Machine Learning

- X and Y in Machine Learning

- F1 Score in Machine Learning

Hypothesis in Machine Learning: Comprehensive Overview(2021)

Introduction

Supervised machine learning (ML) is often described as the problem of approximating a target function that maps inputs to outputs. This description is framed as searching through and evaluating candidate hypotheses from hypothesis spaces.

The discussion of hypotheses in machine learning can be confusing for a beginner, particularly because “hypothesis” has a distinct but related meaning in statistics and, more broadly, in science.

Hypothesis Space (H)

The hypothesis space used by an ML system is the set of all hypotheses that it might return. It is typically defined by a hypothesis language, possibly in conjunction with a language bias.

Many ML algorithms rely on some kind of search procedure: given a set of observations and a space of all possible hypotheses, they search this space for the hypotheses that adequately fit the data or are optimal with respect to some other quality criterion.

ML can be described as using the available data to discover a function that most reliably maps inputs to outputs. This is referred to as function approximation: we approximate an unknown target function that can most reliably map inputs to outputs for all possible observations from the problem domain. A model that approximates the target function and performs the mapping of inputs to outputs is known as a hypothesis in machine learning.

The hypothesis class in machine learning is the set of all possible hypotheses that you are searching over, regardless of their form. For convenience, the hypothesis class is usually constrained to one type of function or model at a time, since learning methods typically work on only one type at a time. This doesn’t have to be the case, however:

- Hypothesis classes don’t have to consist of just one kind of function. If you’re searching over exponential, quadratic, and general linear functions, those are what your combined hypothesis class contains.

- Hypothesis classes also don’t have to consist of only simple functions. If you manage to search over all piecewise-tanh2 functions, those functions are what your hypothesis class includes.

The big trade-off is that the larger your hypothesis class, the better its best hypothesis models the underlying true function, but the harder it is to find that best hypothesis. This is related to the bias-variance trade-off.

Hypothesis (h)

A hypothesis in machine learning is a function that best describes the target. The hypothesis that an algorithm comes up with depends on the data and on the bias and restrictions that we have imposed on the data.

The hypothesis formula in machine learning is y = mx + b, where:

- y is the range

- m is the change in y divided by the change in x

- x is the domain

- b is the intercept

The purpose of restricting the hypothesis space in machine learning is to make sure the candidate hypotheses fit the general data that the user cares about. Each hypothesis is checked against the observations or inputs and evaluated accordingly, and it performs the useful function of mapping inputs to outputs. Consequently, in ML the candidate target functions are examined carefully and restricted based on the outcomes (including whether they are free of bias).

The relationship between hypothesis space and inductive bias in machine learning is as follows. The hypothesis space is a collection of valid hypotheses, that is, all the candidate functions. The inductive bias (also called learning bias) of a learning algorithm is the set of assumptions that the learner uses to predict outputs for inputs it has not encountered. Regression and classification are kinds of learning that deal with continuous-valued and discrete-valued outputs, respectively. These problems are called inductive learning problems because we identify a function by induction from data.

The Maximum a Posteriori (MAP) hypothesis in machine learning provides a Bayesian probability framework for fitting model parameters to training data. It is an alternative and sibling to the more common Maximum Likelihood Estimation framework. MAP learning selects the single most probable hypothesis given the data. The prior over hypotheses is still used, and the method is often more tractable than full Bayesian learning.

Bayesian techniques can be used to determine the most probable hypothesis given the data: the MAP hypothesis. This is the optimal hypothesis in the sense that no other hypothesis is more probable.
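A small sketch of MAP selection over a discrete hypothesis space may help. The three candidate coin biases, the prior, and the observed counts are all assumptions made up for this example.

```python
from math import comb

# Hypothetical hypothesis space: three candidate values for a coin's
# probability of heads, each with an assumed prior probability P(h).
priors = {0.3: 0.05, 0.5: 0.90, 0.8: 0.05}
heads, flips = 8, 10  # assumed observed data D

def likelihood(p, heads, flips):
    """P(D | h) under a binomial model with heads probability p."""
    return comb(flips, heads) * p**heads * (1 - p) ** (flips - heads)

# Bayes' rule: P(h | D) is proportional to P(D | h) * P(h).
unnormalized = {h: likelihood(h, heads, flips) * prior for h, prior in priors.items()}
evidence = sum(unnormalized.values())
posterior = {h: value / evidence for h, value in unnormalized.items()}

h_map = max(posterior, key=posterior.get)                      # uses the prior
h_ml = max(priors, key=lambda h: likelihood(h, heads, flips))  # ignores the prior

print("MAP hypothesis:", h_map)
print("Maximum likelihood hypothesis:", h_ml)
```

With this strong prior on a fair coin, the MAP hypothesis and the maximum likelihood hypothesis differ, which shows how the prior enters the choice.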

Hypothesis in machine learning (ML): a candidate model that approximates a target function for mapping inputs to outputs.

Hypothesis in statistics: a probabilistic explanation about the presence of a relationship between observations.

Hypothesis in science: a provisional explanation that fits the evidence and can be disproved or confirmed. We can see that a hypothesis in machine learning draws on the broader meaning of a hypothesis in science.

Hypothesis Testing – A Deep Dive into Hypothesis Testing, The Backbone of Statistical Inference

- September 21, 2023

Explore the intricacies of hypothesis testing, a cornerstone of statistical analysis. Dive into methods, interpretations, and applications for making data-driven decisions.

In this Blog post we will learn:

- What is Hypothesis Testing?

- Steps in Hypothesis Testing

  - 2.1. Set up Hypotheses: Null and Alternative

  - 2.2. Choose a Significance Level (α)

  - 2.3. Calculate a test statistic and P-Value

  - 2.4. Make a Decision

- Example : Testing a new drug.

- Example in python

1. What is Hypothesis Testing?

In simple terms, hypothesis testing is a method used to make decisions or inferences about population parameters based on sample data. Imagine being handed a die and asked if it’s biased. By rolling it a few times and analyzing the outcomes, you’d be engaging in the essence of hypothesis testing.

Think of hypothesis testing as the scientific method of the statistics world. Suppose you hear claims like “This new drug works wonders!” or “Our new website design boosts sales.” How do you know if these statements hold water? Enter hypothesis testing.

2. Steps in Hypothesis Testing

- Set up Hypotheses : Begin with a null hypothesis (H0) and an alternative hypothesis (Ha).

- Choose a Significance Level (α) : Typically 0.05, this is the probability of rejecting the null hypothesis when it’s actually true. Think of it as the chance of accusing an innocent person.

- Calculate Test statistic and P-Value : Gather evidence (data) and calculate a test statistic.

- p-value : This is the probability of observing data at least as extreme as what was actually observed, given that the null hypothesis is true. A small p-value (typically ≤ 0.05) suggests the data is inconsistent with the null hypothesis.

- Decision Rule : If the p-value is less than or equal to α, you reject the null hypothesis in favor of the alternative.

2.1. Set up Hypotheses: Null and Alternative

Before diving into testing, we must formulate hypotheses. The null hypothesis (H0) represents the default assumption, while the alternative hypothesis (H1) challenges it.

For instance, in drug testing, H0 : “The new drug is no better than the existing one,” H1 : “The new drug is superior .”

2.2. Choose a Significance Level (α)

You collect and analyze data to test the H0 and H1 hypotheses. Based on your analysis, you decide whether to reject the null hypothesis in favor of the alternative or fail to reject the null hypothesis.

The significance level, often denoted by $α$, represents the probability of rejecting the null hypothesis when it is actually true.

In other words, it’s the risk you’re willing to take of making a Type I error (false positive).

Type I Error (False Positive) :

- Symbolized by the Greek letter alpha (α).

- Occurs when you incorrectly reject a true null hypothesis . In other words, you conclude that there is an effect or difference when, in reality, there isn’t.

- The probability of making a Type I error is denoted by the significance level of a test. Commonly, tests are conducted at the 0.05 significance level , which means there’s a 5% chance of making a Type I error .

- Commonly used significance levels are 0.01, 0.05, and 0.10, but the choice depends on the context of the study and the level of risk one is willing to accept.

Example : If a drug is not effective (truth), but a clinical trial incorrectly concludes that it is effective (based on the sample data), then a Type I error has occurred.

Type II Error (False Negative) :

- Symbolized by the Greek letter beta (β).

- Occurs when you fail to reject a false null hypothesis. This means you conclude there is no effect or difference when, in reality, there is.

- The probability of making a Type II error is denoted by β. The power of a test (1 – β) represents the probability of correctly rejecting a false null hypothesis.

Example : If a drug is effective (truth), but a clinical trial incorrectly concludes that it is not effective (based on the sample data), then a Type II error has occurred.

Balancing the Errors :

In practice, there’s a trade-off between Type I and Type II errors. Reducing the risk of one typically increases the risk of the other. For example, if you want to decrease the probability of a Type I error (by setting a lower significance level), you might increase the probability of a Type II error unless you compensate by collecting more data or making other adjustments.

It’s essential to understand the consequences of both types of errors in any given context. In some situations, a Type I error might be more severe, while in others, a Type II error might be of greater concern. This understanding guides researchers in designing their experiments and choosing appropriate significance levels.
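The trade-off can be seen in a small simulation. This is only a sketch: it assumes a two-sided z-test with known standard deviation, normally distributed data, and an effect size of 0.5, and the function names are made up for this example.

```python
import random
from statistics import NormalDist, mean

def z_test_p_value(sample, mu0, sigma):
    """Two-sided p-value for H0: population mean == mu0, with known sigma."""
    z = (mean(sample) - mu0) / (sigma / len(sample) ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))

def rejection_rate(true_mean, alpha, trials=2000, n=25, seed=0):
    """Fraction of simulated experiments in which H0: mean == 0 is rejected."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, 1.0) for _ in range(n)]
        if z_test_p_value(sample, mu0=0.0, sigma=1.0) <= alpha:
            rejections += 1
    return rejections / trials

# H0 is true (mean really is 0): every rejection is a Type I error.
type_i_05 = rejection_rate(true_mean=0.0, alpha=0.05)
type_i_01 = rejection_rate(true_mean=0.0, alpha=0.01)

# H0 is false (mean is 0.5): the rejection rate is the power, 1 - beta.
power_05 = rejection_rate(true_mean=0.5, alpha=0.05)
power_01 = rejection_rate(true_mean=0.5, alpha=0.01)

print(f"alpha=0.05: Type I rate {type_i_05:.3f}, Type II rate {1 - power_05:.3f}")
print(f"alpha=0.01: Type I rate {type_i_01:.3f}, Type II rate {1 - power_01:.3f}")
```

Because the same seed is reused, both significance levels are evaluated on identical simulated experiments. Lowering α can only remove rejections, so the Type I rate falls while the Type II rate rises.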

2.3. Calculate a test statistic and P-Value

Test statistic : A test statistic is a single number that helps us understand how far our sample data is from what we’d expect under a null hypothesis (a basic assumption we’re trying to test against). Generally, the larger the test statistic, the more evidence we have against our null hypothesis. It helps us decide whether the differences we observe in our data are due to random chance or if there’s an actual effect.

P-value : The P-value tells us how likely we would get our observed results (or something more extreme) if the null hypothesis were true. It’s a value between 0 and 1. – A smaller P-value (typically below 0.05) means that the observation is rare under the null hypothesis, so we might reject the null hypothesis. – A larger P-value suggests that what we observed could easily happen by random chance, so we might not reject the null hypothesis.

2.4. Make a Decision

Relationship between $α$ and P-Value

When conducting a hypothesis test:

We first choose a significance level $α$.

We then calculate the test statistic from our sample data and, from it, the p-value.

Finally, we compare the p-value to our chosen $α$:

- If $p−value≤α$: We reject the null hypothesis in favor of the alternative hypothesis. The result is said to be statistically significant.

- If $p−value>α$: We fail to reject the null hypothesis. There isn’t enough statistical evidence to support the alternative hypothesis.

3. Example : Testing a new drug.

Imagine we are investigating whether a new drug treats headaches faster than a placebo.

Setting Up the Experiment : You gather 100 people who suffer from headaches. Half of them (50 people) are given the new drug (let’s call this the ‘Drug Group’), and the other half are given a sugar pill, which doesn’t contain any medication (the ‘Placebo Group’).

- Set up Hypotheses : Before starting, you make a prediction:

- Null Hypothesis (H0): The new drug has no effect. Any difference in healing time between the two groups is just due to random chance.

- Alternative Hypothesis (H1): The new drug does have an effect. The difference in healing time between the two groups is significant and not just by chance.

Calculate Test statistic and P-Value : After the experiment, you analyze the data. The “test statistic” is a number that helps you understand the difference between the two groups in terms of standard units.

For instance, let’s say:

- The average healing time in the Drug Group is 2 hours.

- The average healing time in the Placebo Group is 3 hours.

The test statistic helps you understand how significant this 1-hour difference is. If the groups are large and the spread of healing times in each group is small, then this difference might be significant. But if there’s a huge variation in healing times, the 1-hour difference might not be so special.

Imagine the P-value as answering this question: “If the new drug had NO real effect, what’s the probability that I’d see a difference as extreme (or more extreme) as the one I found, just by random chance?”

For instance:

- P-value of 0.01 means there’s a 1% chance that the observed difference (or a more extreme difference) would occur if the drug had no effect. That’s pretty rare, so we might consider the drug effective.

- P-value of 0.5 means there’s a 50% chance you’d see this difference just by chance. That’s pretty high, so we might not be convinced the drug is doing much.

- If the P-value is less than or equal to $α$ (0.05): the results are “statistically significant,” and we reject the null hypothesis, concluding that the new drug has an effect.

- If the P-value is greater than $α$ (0.05): the results are not statistically significant, and we don’t reject the null hypothesis, remaining unsure if the drug has a genuine effect.
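The question the P-value answers can be turned almost directly into code with a permutation test. If the drug had no effect, the group labels would be interchangeable, so we shuffle them many times and count how often a difference at least as large as the observed one appears. The healing times below are invented for illustration, and this permutation approach is a swapped-in technique for building intuition, not the t-test used later.

```python
import random

# Hypothetical healing times in hours (invented for illustration);
# the group means are 2.0 and 3.0, matching the example above.
drug = [1.8, 2.1, 1.9, 2.4, 2.0, 1.7, 2.2, 2.3, 1.6, 2.0]
placebo = [3.1, 2.8, 3.3, 2.9, 3.0, 2.7, 3.4, 2.6, 3.2, 3.0]

def mean(values):
    return sum(values) / len(values)

observed = abs(mean(drug) - mean(placebo))

rng = random.Random(42)
pooled = drug + placebo
n_drug = len(drug)
n_permutations = 10_000
at_least_as_extreme = 0

for _ in range(n_permutations):
    rng.shuffle(pooled)  # pretend the labels carry no information
    diff = abs(mean(pooled[:n_drug]) - mean(pooled[n_drug:]))
    if diff >= observed:
        at_least_as_extreme += 1

# Add-one correction so the estimated p-value is never exactly zero.
p_value = (at_least_as_extreme + 1) / (n_permutations + 1)
print(f"observed difference = {observed:.2f} hours, p = {p_value:.4f}")
```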

4. Example in python

For simplicity, let’s say we’re using a t-test (common for comparing means). Let’s dive into Python:
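A minimal sketch of the test, assuming NumPy and SciPy are installed. The healing times are simulated: the group means follow the example above, while the spread of 0.8 hours and the random seed are assumptions made for illustration.

```python
import numpy as np
from scipy import stats

# Simulated healing times in hours for 50 people per group.
rng = np.random.default_rng(seed=1)
drug_group = rng.normal(loc=2.0, scale=0.8, size=50)
placebo_group = rng.normal(loc=3.0, scale=0.8, size=50)

# Independent two-sample t-test comparing the two group means.
t_stat, p_value = stats.ttest_ind(drug_group, placebo_group)

alpha = 0.05
print(f"t = {t_stat:.3f}, p = {p_value:.3g}")
if p_value <= alpha:
    print("The results are statistically significant: reject H0.")
else:
    print("The results are not statistically significant: fail to reject H0.")
```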

Making a Decision : “The results are statistically significant! p-value < 0.05 , The drug seems to have an effect!” If not, we’d say, “Looks like the drug isn’t as miraculous as we thought.”

5. Conclusion

Hypothesis testing is an indispensable tool in data science, allowing us to make data-driven decisions with confidence. By understanding its principles, conducting tests properly, and considering real-world applications, you can harness the power of hypothesis testing to unlock valuable insights from your data.

What is Hypothesis in Machine Learning? How to Form a Hypothesis?

Hypothesis Testing is a broad subject that applies to many fields. In statistics, hypothesis testing typically uses sample data from one or more populations to check whether an observed effect is statistically significant.


This involves calculating the p-value and comparing it with the significance level, alpha (also called the critical value in this article). In Machine Learning, by contrast, a hypothesis is a candidate function that approximates the mapping from the independent features to the target. In other words, it maps the inputs to the outputs.

By the end of this tutorial, you will know the following:

- What is Hypothesis in Statistics vs Machine Learning

- What is Hypothesis space?

- Process of forming a hypothesis

Hypothesis in Statistics

A Hypothesis is an assumption about a result that is falsifiable, meaning it can be proven wrong by evidence. After a test, we either reject a hypothesis or fail to reject it. We never accept a hypothesis in statistics, because the conclusions are probabilistic and we are never 100% certain. Before the start of the experiment, we define two hypotheses:

1. Null Hypothesis: says that there is no significant effect

2. Alternative Hypothesis: says that there is some significant effect

In statistics, we compare the p-value (calculated using a statistical test suited to the data) with a threshold called the significance level, or alpha (this article also refers to it as the critical value). The p-value is the probability of observing a result at least as extreme as the one obtained, assuming the null hypothesis is true. A large p-value means the data are consistent with the null hypothesis, so we fail to reject the null hypothesis.

In other words, an effect of that size could plausibly have arisen by chance, and it is not statistically significant. On the other hand, a very small p-value means that such a result would be unlikely if the null hypothesis were true, which counts as evidence against the null hypothesis.


Significance Level

The Significance Level is set before starting the experiment. It defines how much error we tolerate and the threshold at which an effect is considered significant. A common choice is a 95% confidence level, which corresponds to a significance level (alpha) of 0.05: if the null hypothesis is true, there is a 5% chance of the test fooling us into rejecting it. In other words, the critical value of 0.05 acts as a threshold. Similarly, a 99% confidence level corresponds to a critical value of 0.01.

A statistical test is carried out on the sample data to compute the p-value, which is then compared with the critical value (alpha). If the p-value is less than the critical value, we conclude that the effect is statistically significant and reject the Null Hypothesis (which said there is no significant effect). If the p-value is greater than or equal to the critical value, we do not have enough evidence of an effect and fail to reject the Null Hypothesis.
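The decision rule described above can be written as a small function. This is only a minimal sketch: the function name `decide` and the default threshold of 0.05 are illustrative choices, not part of any library.

```python
def decide(p_value: float, alpha: float = 0.05) -> str:
    """Compare a p-value with the chosen threshold alpha."""
    if not 0.0 <= p_value <= 1.0:
        raise ValueError("p_value must lie between 0 and 1")
    if p_value < alpha:
        # The observed effect would be unlikely if the null were true.
        return "reject the null hypothesis"
    # Not enough evidence against the null; we never "accept" it.
    return "fail to reject the null hypothesis"


print(decide(0.03))              # threshold 0.05
print(decide(0.03, alpha=0.01))  # stricter threshold 0.01
```

Note that the same p-value can lead to different decisions under different thresholds, which is why the threshold has to be fixed before the experiment.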

Because we can never be 100% sure, there is always a chance that the test is carried out correctly but the result is misleading. We may reject the null hypothesis when it is actually true, or fail to reject the null hypothesis when it is actually false. These are, respectively, the Type 1 and Type 2 errors of Hypothesis Testing.

Example

Suppose you’re working for a vaccine manufacturer and your team develops a vaccine for Covid-19. To demonstrate the efficacy of this vaccine, it needs to be shown statistically that it is effective in humans. Therefore, we take two groups of people of equal size and similar characteristics. We give the vaccine to group A and a placebo to group B. We then analyze how many people in group A got infected and how many in group B got infected.

We repeat this test multiple times to see whether group A developed any significant immunity against Covid-19. We calculate the p-value for each of these tests and find that the p-values are always less than the critical value. Hence, we reject the null hypothesis and conclude that there is indeed a significant effect.
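One way such a comparison could be carried out is a two-proportion z-test, sketched below using only the standard library. The article does not say which test was used, so the choice of test, the function name, and the infection counts are all assumptions made for illustration.

```python
import math


def two_proportion_z_test(infected_a, n_a, infected_b, n_b):
    """One-sided test of H0: rate_a >= rate_b against H1: rate_a < rate_b."""
    rate_a = infected_a / n_a
    rate_b = infected_b / n_b
    # Pooled infection rate under the null hypothesis of no difference.
    pooled = (infected_a + infected_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Left-tail probability P(Z <= z) for a standard normal variable.
    p_value = 0.5 * math.erfc(-z / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 20 of 1000 vaccinated vs 60 of 1000 placebo infected.
z, p_value = two_proportion_z_test(20, 1000, 60, 1000)
alpha = 0.05
print(f"z = {z:.2f}, p-value = {p_value:.2g}")
print("reject H0" if p_value < alpha else "fail to reject H0")
```

With these made-up counts the p-value falls far below 0.05, so the null hypothesis of no effect would be rejected.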


Hypothesis in Machine Learning

In Supervised Machine Learning, the term hypothesis is used when we need to find the function that best maps input to output. This is also called function approximation, because we are approximating an unknown target function that maps the features to the target.

1. Hypothesis (h): A hypothesis is a single model that maps features to the target, for example one trained model with one specific set of parameters. It is denoted by “h”.

2. Hypothesis Space (H): A hypothesis space is the complete range of models and their possible parameters that can be used to model the data. It is denoted by “H”. In other words, a hypothesis is an element of the hypothesis space.

In essence, we have training data (independent features and the target) and assume there is an unknown target function that maps features to the target. We fit different types of algorithms, with different configurations of their hyperparameters, to check which configuration produces the best results. The training data is used to find the best hypothesis in the hypothesis space. The test data is used to validate the results produced by that hypothesis.

Consider an example where we have a dataset of 10000 instances with 10 features and one target. The target is binary, which means it is a binary classification problem. Now, say, we model this data using Logistic Regression and get an accuracy of 78%. We can draw the decision boundary that separates the two classes. This fitted model is a hypothesis (h). Then we evaluate this hypothesis on test data and get a score of 74%.


Now assume we fit a Random Forest model on the same data and get an accuracy of 85%, a clear improvement over Logistic Regression. We then decide to tune the Random Forest's hyperparameters to get a better score on the same data. We run a grid search, fitting multiple Random Forest models and checking their performance. In this step, we are essentially searching the hypothesis space (H) to find a better function. After completing the grid search, the best score is 89%, and we end the search.


Now we also try more models, such as XGBoost, Support Vector Machine and Naive Bayes, to compare their performance on the same data. We then pick the best-performing model and evaluate it on the test data to validate its performance, getting a score of 87%.
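The idea of searching a hypothesis space can be sketched on a much smaller scale than the models named above. In the toy example below, which uses only the standard library, the hypothesis space is a grid of one-dimensional threshold classifiers. The data points, the grid, and the helper names are all made up for illustration.

```python
# Toy data as (feature, label) pairs; the values are invented.
train = [(0.5, 0), (1.0, 0), (1.5, 0), (2.0, 1),
         (2.5, 0), (3.0, 1), (3.5, 1), (4.0, 1)]
test = [(0.8, 0), (1.7, 0), (2.2, 1), (2.8, 1), (3.6, 1)]


def make_hypothesis(threshold):
    """Return one hypothesis h: predict 1 when x >= threshold."""
    return lambda x: 1 if x >= threshold else 0


def accuracy(h, data):
    """Fraction of points on which hypothesis h predicts the label."""
    return sum(h(x) == y for x, y in data) / len(data)


# Hypothesis space H: one threshold classifier per grid point 0.0 .. 4.25.
hypothesis_space = [0.25 * k for k in range(18)]

# Use the training data to pick the best hypothesis in H.
best_threshold = max(
    hypothesis_space,
    key=lambda t: accuracy(make_hypothesis(t), train),
)
best_h = make_hypothesis(best_threshold)

# Use the held-out test data only to check the chosen hypothesis.
print("best threshold:", best_threshold)
print("train accuracy:", accuracy(best_h, train))
print("test accuracy:", accuracy(best_h, test))
```

A grid search over Random Forest hyperparameters follows the same pattern: each configuration defines one candidate hypothesis, and the search keeps the one that scores best.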


Before you go.

The hypothesis is a crucial aspect of Machine Learning and Data Science. It is present in all domains of analytics, be it pharma, software, or sales, and is the deciding factor in whether a change should be introduced. In machine learning, each hypothesis from the hypothesis space is fitted on the training dataset so that its performance can be checked.

A Hypothesis must be falsifiable, which means that it must be possible to test and prove it wrong if the results go against it. The process of searching for the best configuration of the model is time-consuming when a lot of different configurations need to be verified. There are ways to speed up this process as well by using techniques like Random Search of hyperparameters.


Pavan Vadapalli




What is hypothesis in Machine Learning?

Hypothesis is a term that is frequently used in machine learning and data science projects. Machine learning allows us to anticipate outcomes based on previous experience. Data scientists and ML specialists run experiments with the goal of solving a problem, and they begin with an initial guess about how to solve it.

What is a Hypothesis?

A hypothesis is a conjecture or proposed explanation that is based on limited evidence or on assumptions. It is only a conjecture, built on certain known facts, that has yet to be confirmed. A good hypothesis is testable: an experiment can show it to be either supported or false.

Let's look at an example to better grasp the hypothesis. According to some scientists, ultraviolet (UV) light can harm the eyes and induce blindness.

In this case, the scientists state only that UV rays are hazardous to the eyes; that they can lead to blindness is an assumption, and it may turn out not to be true. Assumptions of this kind are referred to as hypotheses.

Defining Hypothesis in Machine Learning

In machine learning, a hypothesis is a mathematical function or model that converts input data into output predictions. It is the model's initial belief or explanation, based on the data supplied. The hypothesis is typically expressed through a collection of parameters characterizing the behavior of the model.

Suppose we're building a model to predict the price of a property based on its size and location. The hypothesis function may look something like this −

$$h(x) = \theta_0 + \theta_1 x_1 + \theta_2 x_2$$

The hypothesis function is h(x), its input data is x, the model's parameters are θ0, θ1, and θ2, and the features are x1 and x2.

The machine learning model's purpose is to discover the optimal values for parameters θ0 through θ2 that minimize the difference between predicted and actual output labels.

To put it another way, we're looking for the hypothesis function that best represents the underlying link between the input and output data.
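As a minimal sketch of this idea, the code below evaluates the linear hypothesis for two candidate parameter settings and compares them by mean squared error. The data rows are invented (they were generated from price = 10 + 2 * size + 5 * location score), and the function names are illustrative only.

```python
def h(theta, x1, x2):
    """Linear hypothesis: theta0 + theta1 * x1 + theta2 * x2."""
    theta0, theta1, theta2 = theta
    return theta0 + theta1 * x1 + theta2 * x2


def mean_squared_error(theta, rows):
    """Average squared gap between predicted and actual outputs."""
    return sum((h(theta, x1, x2) - y) ** 2 for x1, x2, y in rows) / len(rows)


# Hypothetical rows: (size, location score, price).
rows = [
    (1.0, 1.0, 17.0),
    (2.0, 1.0, 19.0),
    (2.0, 3.0, 29.0),
    (3.0, 2.0, 26.0),
]

# Two candidate hypotheses, i.e. two choices of the parameters.
theta_guess = (0.0, 0.0, 0.0)
theta_better = (10.0, 2.0, 5.0)

print("error of first guess:", mean_squared_error(theta_guess, rows))
print("error of better fit:", mean_squared_error(theta_better, rows))
```

A learning algorithm such as gradient descent automates this comparison by adjusting the parameters step by step in the direction that lowers the error.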

Types of Hypotheses in Machine Learning

After identifying the problem and gathering evidence, the next step is to build a hypothesis. A hypothesis is an explanation of, or solution to, a problem based on limited data. It acts as a springboard for further investigation and experimentation. In machine learning, a hypothesis is a function that converts inputs to outputs based on some assumptions. A good hypothesis contributes to the creation of an accurate and efficient machine-learning model. Several types of hypotheses used when testing such models are as follows −

1. Null Hypothesis

A null hypothesis is a basic hypothesis that states that no link exists between the independent and dependent variables. In other words, it assumes the independent variable has no influence on the dependent variable. It is denoted by H0. The null hypothesis is typically rejected if the p-value is less than the significance level (α). The significance level is the probability of rejecting the null hypothesis when it is in fact correct. Null hypotheses are tested with procedures such as t-tests and ANOVA.

2. Alternative Hypothesis

An alternative hypothesis is a hypothesis that contradicts the null hypothesis. It assumes that there is a relationship between the independent and dependent variables. In other words, it assumes that there is an effect of the independent variable on the dependent variable. It is denoted by Ha. An alternative hypothesis is generally accepted if the p-value is less than the significance level (α). An alternative hypothesis is also known as a research hypothesis.

3. One-tailed Hypothesis

A one-tailed test is a type of significance test in which the region of rejection is located at one end of the sampling distribution. It checks whether the estimated test parameter is greater than, or less than, the critical value, in which case the alternative hypothesis rather than the null hypothesis is accepted. It is commonly used with the chi-square distribution, where the whole critical region, of size α, is placed in one of the two tails. A one-tailed test can be either left-tailed or right-tailed.

4. Two-tailed Hypothesis

The two-tailed test is a hypothesis test in which the region of rejection, or critical area, is on both ends of the sampling distribution (for example, the normal distribution). It determines whether the sample tested falls within or outside a certain range of values, and the alternative hypothesis is accepted if the calculated value falls in either of the two tails of the probability distribution. α is split into two equal parts, one in each tail, and the estimated parameter may be either above or below the assumed parameter, so extreme values in either direction count as evidence against the null hypothesis.
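The difference between one-tailed and two-tailed tests shows up directly in how the p-value is computed. The sketch below assumes the test statistic is a z-score that follows a standard normal distribution under the null hypothesis. The function names and the example value z = 1.8 are illustrative.

```python
import math


def normal_cdf(z):
    """P(Z <= z) for a standard normal variable."""
    return 0.5 * math.erfc(-z / math.sqrt(2))


def p_value(z, tail="two-sided"):
    """p-value of a z statistic for a left, right, or two-sided test."""
    if tail == "left":
        return normal_cdf(z)
    if tail == "right":
        return normal_cdf(-z)
    if tail == "two-sided":
        # Both tails count, so the one-tail area is doubled.
        return 2.0 * normal_cdf(-abs(z))
    raise ValueError("tail must be 'left', 'right', or 'two-sided'")


z = 1.8
alpha = 0.05
for tail in ("right", "two-sided"):
    p = p_value(z, tail)
    verdict = "reject H0" if p < alpha else "fail to reject H0"
    print(f"{tail}: p = {p:.4f} -> {verdict}")
```

For the same statistic, the right-tailed p-value is half the two-sided one, so a result can be significant in a one-tailed test and not in a two-tailed test at the same α.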

Overall, the hypothesis plays a critical role in the machine learning model. It provides a starting point for the model to make predictions and helps to guide the learning process. The accuracy of the hypothesis is evaluated using various metrics like mean squared error or accuracy.

The hypothesis is a mathematical function or model that converts input data into output predictions, typically expressed as a collection of parameters characterizing the behavior of the model. It is an explanation or solution to a problem based on insufficient data. A good hypothesis contributes to the creation of an accurate and efficient machine-learning model. A two-tailed hypothesis is used when there is no prior knowledge or theoretical basis to infer a certain direction of the link.


- J48 - Public Policy

- J49 - Other

- Browse content in J5 - Labor-Management Relations, Trade Unions, and Collective Bargaining

- J50 - General

- J51 - Trade Unions: Objectives, Structure, and Effects

- J53 - Labor-Management Relations; Industrial Jurisprudence

- Browse content in J6 - Mobility, Unemployment, Vacancies, and Immigrant Workers

- J60 - General

- J61 - Geographic Labor Mobility; Immigrant Workers

- J62 - Job, Occupational, and Intergenerational Mobility

- J63 - Turnover; Vacancies; Layoffs

- J64 - Unemployment: Models, Duration, Incidence, and Job Search

- J65 - Unemployment Insurance; Severance Pay; Plant Closings

- J68 - Public Policy

- Browse content in J7 - Labor Discrimination

- J71 - Discrimination

- J78 - Public Policy

- Browse content in J8 - Labor Standards: National and International

- J81 - Working Conditions

- J88 - Public Policy

- Browse content in K - Law and Economics

- Browse content in K0 - General

- K00 - General

- Browse content in K1 - Basic Areas of Law

- K14 - Criminal Law

- K2 - Regulation and Business Law

- Browse content in K3 - Other Substantive Areas of Law

- K31 - Labor Law

- Browse content in K4 - Legal Procedure, the Legal System, and Illegal Behavior

- K40 - General

- K41 - Litigation Process

- K42 - Illegal Behavior and the Enforcement of Law

- Browse content in L - Industrial Organization

- Browse content in L0 - General

- L00 - General

- Browse content in L1 - Market Structure, Firm Strategy, and Market Performance

- L10 - General

- L11 - Production, Pricing, and Market Structure; Size Distribution of Firms

- L13 - Oligopoly and Other Imperfect Markets

- L14 - Transactional Relationships; Contracts and Reputation; Networks

- L15 - Information and Product Quality; Standardization and Compatibility

- L16 - Industrial Organization and Macroeconomics: Industrial Structure and Structural Change; Industrial Price Indices

- L19 - Other

- Browse content in L2 - Firm Objectives, Organization, and Behavior

- L21 - Business Objectives of the Firm

- L22 - Firm Organization and Market Structure

- L23 - Organization of Production

- L24 - Contracting Out; Joint Ventures; Technology Licensing

- L25 - Firm Performance: Size, Diversification, and Scope

- L26 - Entrepreneurship

- Browse content in L3 - Nonprofit Organizations and Public Enterprise

- L33 - Comparison of Public and Private Enterprises and Nonprofit Institutions; Privatization; Contracting Out

- Browse content in L4 - Antitrust Issues and Policies

- L40 - General

- L41 - Monopolization; Horizontal Anticompetitive Practices

- L42 - Vertical Restraints; Resale Price Maintenance; Quantity Discounts

- Browse content in L5 - Regulation and Industrial Policy

- L50 - General

- L51 - Economics of Regulation

- Browse content in L6 - Industry Studies: Manufacturing

- L60 - General

- L62 - Automobiles; Other Transportation Equipment; Related Parts and Equipment

- L63 - Microelectronics; Computers; Communications Equipment

- L66 - Food; Beverages; Cosmetics; Tobacco; Wine and Spirits

- Browse content in L7 - Industry Studies: Primary Products and Construction

- L71 - Mining, Extraction, and Refining: Hydrocarbon Fuels

- L73 - Forest Products

- Browse content in L8 - Industry Studies: Services

- L81 - Retail and Wholesale Trade; e-Commerce

- L83 - Sports; Gambling; Recreation; Tourism

- L84 - Personal, Professional, and Business Services

- L86 - Information and Internet Services; Computer Software

- Browse content in L9 - Industry Studies: Transportation and Utilities

- L91 - Transportation: General

- L93 - Air Transportation

- L94 - Electric Utilities

- Browse content in M - Business Administration and Business Economics; Marketing; Accounting; Personnel Economics

- Browse content in M1 - Business Administration

- M11 - Production Management

- M12 - Personnel Management; Executives; Executive Compensation

- M14 - Corporate Culture; Social Responsibility

- Browse content in M2 - Business Economics

- M21 - Business Economics

- Browse content in M3 - Marketing and Advertising

- M31 - Marketing

- M37 - Advertising

- Browse content in M4 - Accounting and Auditing

- M42 - Auditing

- M48 - Government Policy and Regulation

- Browse content in M5 - Personnel Economics

- M50 - General

- M51 - Firm Employment Decisions; Promotions

- M52 - Compensation and Compensation Methods and Their Effects

- M53 - Training

- M54 - Labor Management

- Browse content in N - Economic History

- Browse content in N0 - General

- N00 - General

- N01 - Development of the Discipline: Historiographical; Sources and Methods

- Browse content in N1 - Macroeconomics and Monetary Economics; Industrial Structure; Growth; Fluctuations

- N10 - General, International, or Comparative

- N11 - U.S.; Canada: Pre-1913

- N12 - U.S.; Canada: 1913-

- N13 - Europe: Pre-1913

- N17 - Africa; Oceania

- Browse content in N2 - Financial Markets and Institutions

- N20 - General, International, or Comparative

- N22 - U.S.; Canada: 1913-

- N23 - Europe: Pre-1913

- Browse content in N3 - Labor and Consumers, Demography, Education, Health, Welfare, Income, Wealth, Religion, and Philanthropy

- N30 - General, International, or Comparative

- N31 - U.S.; Canada: Pre-1913

- N32 - U.S.; Canada: 1913-

- N33 - Europe: Pre-1913

- N34 - Europe: 1913-

- N36 - Latin America; Caribbean

- N37 - Africa; Oceania

- Browse content in N4 - Government, War, Law, International Relations, and Regulation

- N40 - General, International, or Comparative

- N41 - U.S.; Canada: Pre-1913

- N42 - U.S.; Canada: 1913-

- N43 - Europe: Pre-1913

- N44 - Europe: 1913-

- N45 - Asia including Middle East

- N47 - Africa; Oceania

- Browse content in N5 - Agriculture, Natural Resources, Environment, and Extractive Industries

- N50 - General, International, or Comparative

- N51 - U.S.; Canada: Pre-1913

- Browse content in N6 - Manufacturing and Construction

- N63 - Europe: Pre-1913

- Browse content in N7 - Transport, Trade, Energy, Technology, and Other Services

- N71 - U.S.; Canada: Pre-1913

- Browse content in N8 - Micro-Business History

- N82 - U.S.; Canada: 1913-

- Browse content in N9 - Regional and Urban History

- N91 - U.S.; Canada: Pre-1913

- N92 - U.S.; Canada: 1913-

- N93 - Europe: Pre-1913

- N94 - Europe: 1913-

- Browse content in O - Economic Development, Innovation, Technological Change, and Growth

- Browse content in O1 - Economic Development

- O10 - General

- O11 - Macroeconomic Analyses of Economic Development

- O12 - Microeconomic Analyses of Economic Development

- O13 - Agriculture; Natural Resources; Energy; Environment; Other Primary Products

- O14 - Industrialization; Manufacturing and Service Industries; Choice of Technology

- O15 - Human Resources; Human Development; Income Distribution; Migration

- O16 - Financial Markets; Saving and Capital Investment; Corporate Finance and Governance

- O17 - Formal and Informal Sectors; Shadow Economy; Institutional Arrangements

- O18 - Urban, Rural, Regional, and Transportation Analysis; Housing; Infrastructure

- O19 - International Linkages to Development; Role of International Organizations

- Browse content in O2 - Development Planning and Policy

- O23 - Fiscal and Monetary Policy in Development

- O25 - Industrial Policy

- Browse content in O3 - Innovation; Research and Development; Technological Change; Intellectual Property Rights

- O30 - General

- O31 - Innovation and Invention: Processes and Incentives

- O32 - Management of Technological Innovation and R&D

- O33 - Technological Change: Choices and Consequences; Diffusion Processes

- O34 - Intellectual Property and Intellectual Capital

- O38 - Government Policy

- Browse content in O4 - Economic Growth and Aggregate Productivity

- O40 - General

- O41 - One, Two, and Multisector Growth Models

- O43 - Institutions and Growth

- O44 - Environment and Growth

- O47 - Empirical Studies of Economic Growth; Aggregate Productivity; Cross-Country Output Convergence

- Browse content in O5 - Economywide Country Studies

- O52 - Europe

- O53 - Asia including Middle East

- O55 - Africa

- Browse content in P - Economic Systems

- Browse content in P0 - General

- P00 - General

- Browse content in P1 - Capitalist Systems

- P10 - General

- P16 - Political Economy

- P17 - Performance and Prospects

- P18 - Energy: Environment

- Browse content in P2 - Socialist Systems and Transitional Economies

- P26 - Political Economy; Property Rights

- Browse content in P3 - Socialist Institutions and Their Transitions

- P37 - Legal Institutions; Illegal Behavior

- Browse content in P4 - Other Economic Systems

- P48 - Political Economy; Legal Institutions; Property Rights; Natural Resources; Energy; Environment; Regional Studies

- Browse content in P5 - Comparative Economic Systems

- P51 - Comparative Analysis of Economic Systems

- Browse content in Q - Agricultural and Natural Resource Economics; Environmental and Ecological Economics

- Browse content in Q1 - Agriculture

- Q10 - General

- Q12 - Micro Analysis of Farm Firms, Farm Households, and Farm Input Markets

- Q13 - Agricultural Markets and Marketing; Cooperatives; Agribusiness